Mint is a full-stack fintech platform built across 12 independently deployable services. It covers the full lifecycle of a digital wallet - from identity verification and fraud scoring to social payments, budget analytics, and a tamper-proof audit log - all wired together through synchronous gRPC and asynchronous Kafka.

Overview

The platform is polyglot by design: auth and wallet are FastAPI (Python), everything else is NestJS (TypeScript), and the frontend is Next.js 15. Services communicate synchronously over gRPC when a result is needed before proceeding (fraud scoring, KYC limit checks, wallet settlement), and asynchronously over Kafka for fan-out side effects (notifications, analytics, audit logging, webhooks). Every service has its own isolated PostgreSQL database.

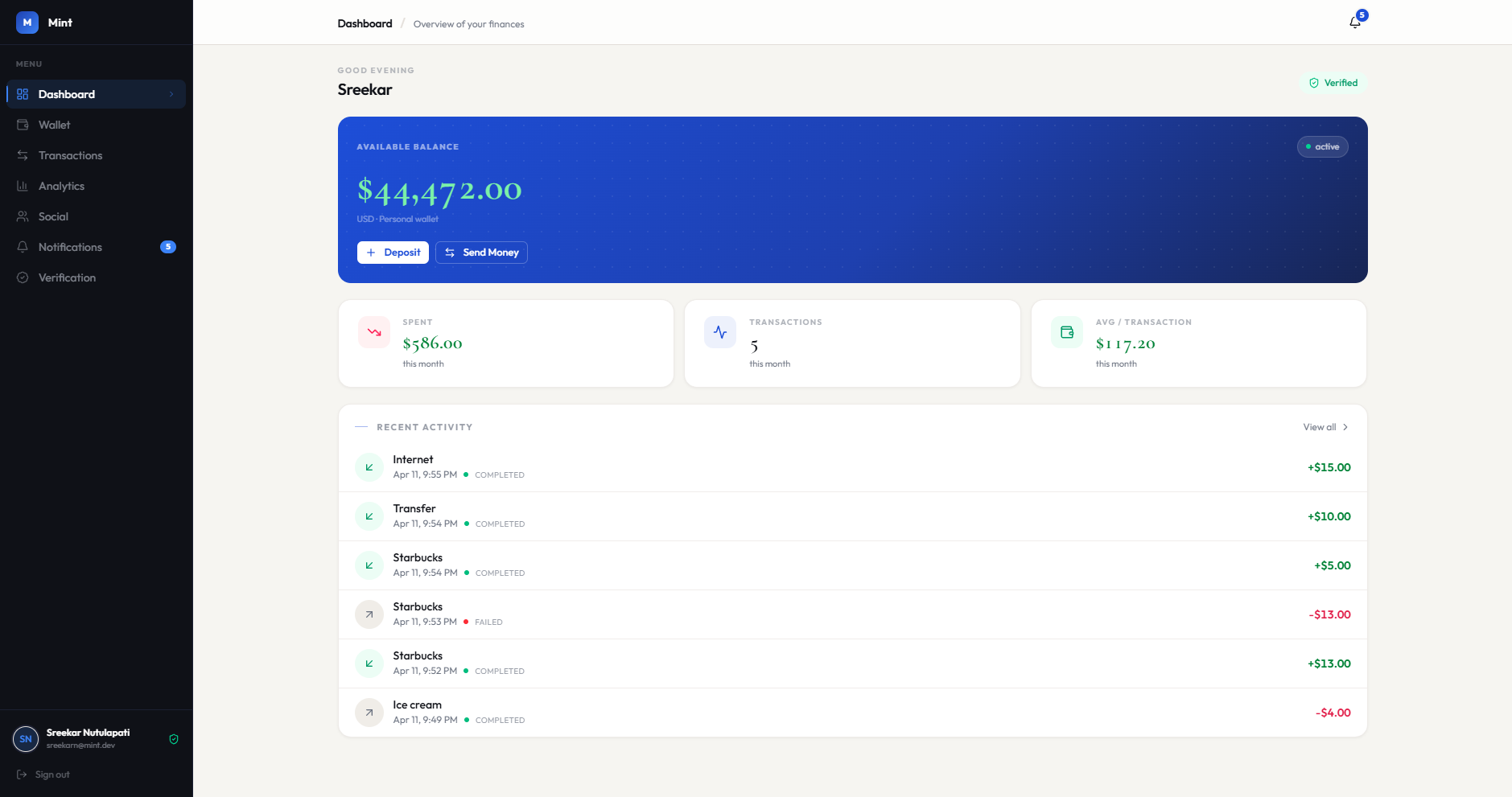

Key Features

Authentication & Identity

- JWT (RS256) with JWKS - Auth service signs tokens with an RSA private key; all other services verify locally against the public JWKS endpoint, no round-trips required

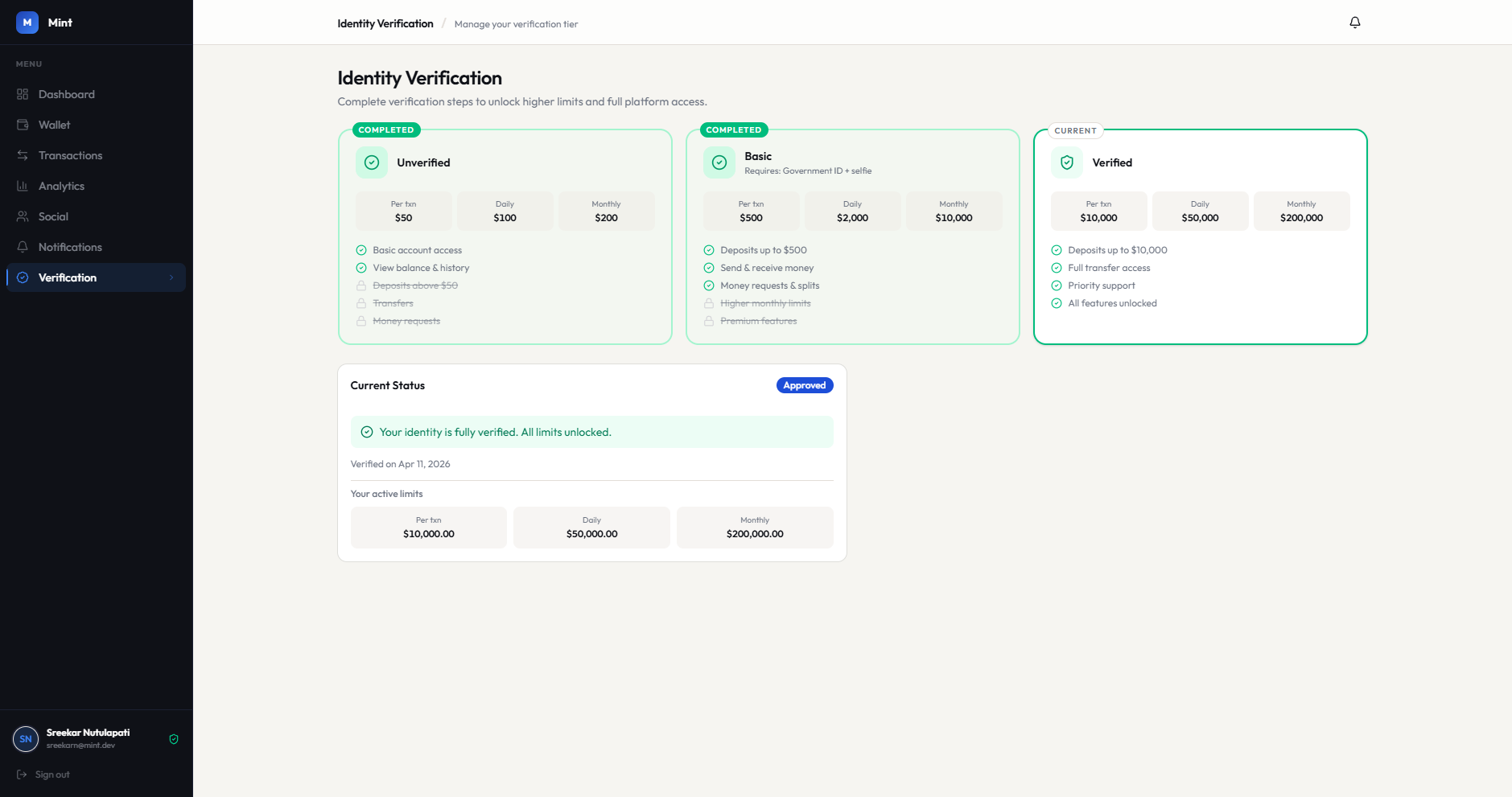

- KYC Tiers - Users progress through UNVERIFIED -> BASIC -> VERIFIED; each tier unlocks higher per-transaction and monthly spend limits

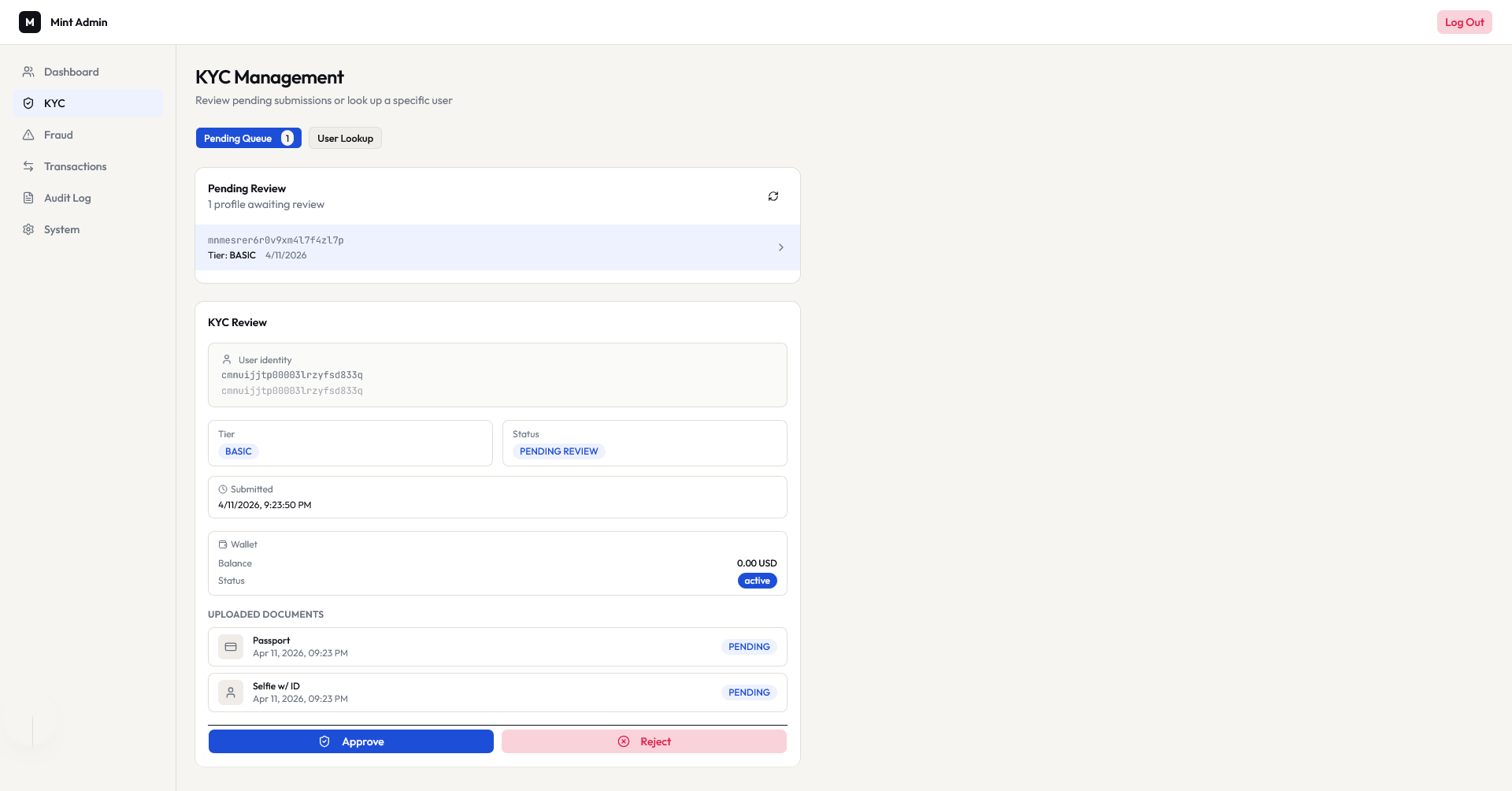

- Document Upload - KYC submissions are stored in MinIO (S3-compatible); admins review and approve/reject via a dedicated queue

- Admin Role - Separate JWT claim gates the admin console; admin service enforces it on every request
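
The tier progression above can be sketched as a lookup from tier to limits. This is a minimal illustration, not Mint's implementation: the limit amounts, `TierLimits`, and `check_limits` are hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass
from decimal import Decimal
from enum import Enum


class KycTier(Enum):
    UNVERIFIED = "UNVERIFIED"
    BASIC = "BASIC"
    VERIFIED = "VERIFIED"


@dataclass(frozen=True)
class TierLimits:
    per_transaction: Decimal
    monthly: Decimal


# Hypothetical amounts for illustration only; the real limits are not stated here.
TIER_LIMITS = {
    KycTier.UNVERIFIED: TierLimits(Decimal("50"), Decimal("200")),
    KycTier.BASIC: TierLimits(Decimal("500"), Decimal("2000")),
    KycTier.VERIFIED: TierLimits(Decimal("5000"), Decimal("20000")),
}


def check_limits(tier: KycTier, amount: Decimal, spent_this_month: Decimal) -> tuple[bool, str]:
    """Return (allowed, reason) for a proposed transaction at the given tier."""
    limits = TIER_LIMITS[tier]
    if amount > limits.per_transaction:
        return False, "per-transaction limit exceeded"
    if spent_this_month + amount > limits.monthly:
        return False, "monthly limit exceeded"
    return True, "ok"
```

The same 400-unit payment with 1800 already spent would be rejected at BASIC and accepted at VERIFIED under these assumed limits.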

Transaction Engine

- Fraud Scoring - Every transaction calls the fraud service via gRPC before settlement. Returns ALLOW / REVIEW / BLOCK with a 0-100 score and the rules that fired

- KYC Limit Enforcement - Transactions service calls KYC service via gRPC to check per-transaction and daily/monthly limits before debiting

- Atomic Settlement - Wallet debit and credit happen over gRPC; both must succeed or the transaction fails

- State Machine - PENDING -> PROCESSING -> COMPLETED / FAILED / CANCELLED / REVERSED

- Idempotency - `Idempotency-Key` header; responses cached in Redis for 24 hours so retries are safe
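
The idempotency behaviour can be sketched as follows. This is an in-memory stand-in for Redis, assuming the cache is keyed by the `Idempotency-Key` value alone; `IdempotencyCache` and `handle_request` are hypothetical names, not taken from the Mint codebase.

```python
import time
from typing import Any, Callable, Dict, Optional, Tuple

TTL_SECONDS = 24 * 60 * 60  # 24 hours, matching the retention described above


class IdempotencyCache:
    """In-memory stand-in for Redis: maps an Idempotency-Key to a cached response."""

    def __init__(self, clock: Callable[[], float] = time.monotonic) -> None:
        self._clock = clock
        self._entries: Dict[str, Tuple[float, Any]] = {}

    def get(self, key: str) -> Optional[Any]:
        entry = self._entries.get(key)
        if entry is None:
            return None
        expires_at, response = entry
        if self._clock() >= expires_at:
            # Entry has outlived its TTL; treat the key as unseen.
            del self._entries[key]
            return None
        return response

    def put(self, key: str, response: Any) -> None:
        self._entries[key] = (self._clock() + TTL_SECONDS, response)


def handle_request(cache: IdempotencyCache, idempotency_key: str, execute: Callable[[], Any]) -> Any:
    """Run `execute` once per key; a retry with the same key gets the cached response."""
    cached = cache.get(idempotency_key)
    if cached is not None:
        return cached
    response = execute()
    cache.put(idempotency_key, response)
    return response
```

This sketch ignores concurrent retries arriving at the same moment. With a real Redis backend, one common approach is to reserve the key atomically (for example `SET` with the `NX` and `EX` options) before executing the transfer.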

Social Payments

- Money Requests - Request funds from contacts with a 7-day expiry (BullMQ scheduled job). Accepting a request automatically triggers a transfer via Kafka -> transactions service

- Bill Splits - Create a split, assign per-participant amounts, track individual payments

- Contacts - Managed contact list scoped per user

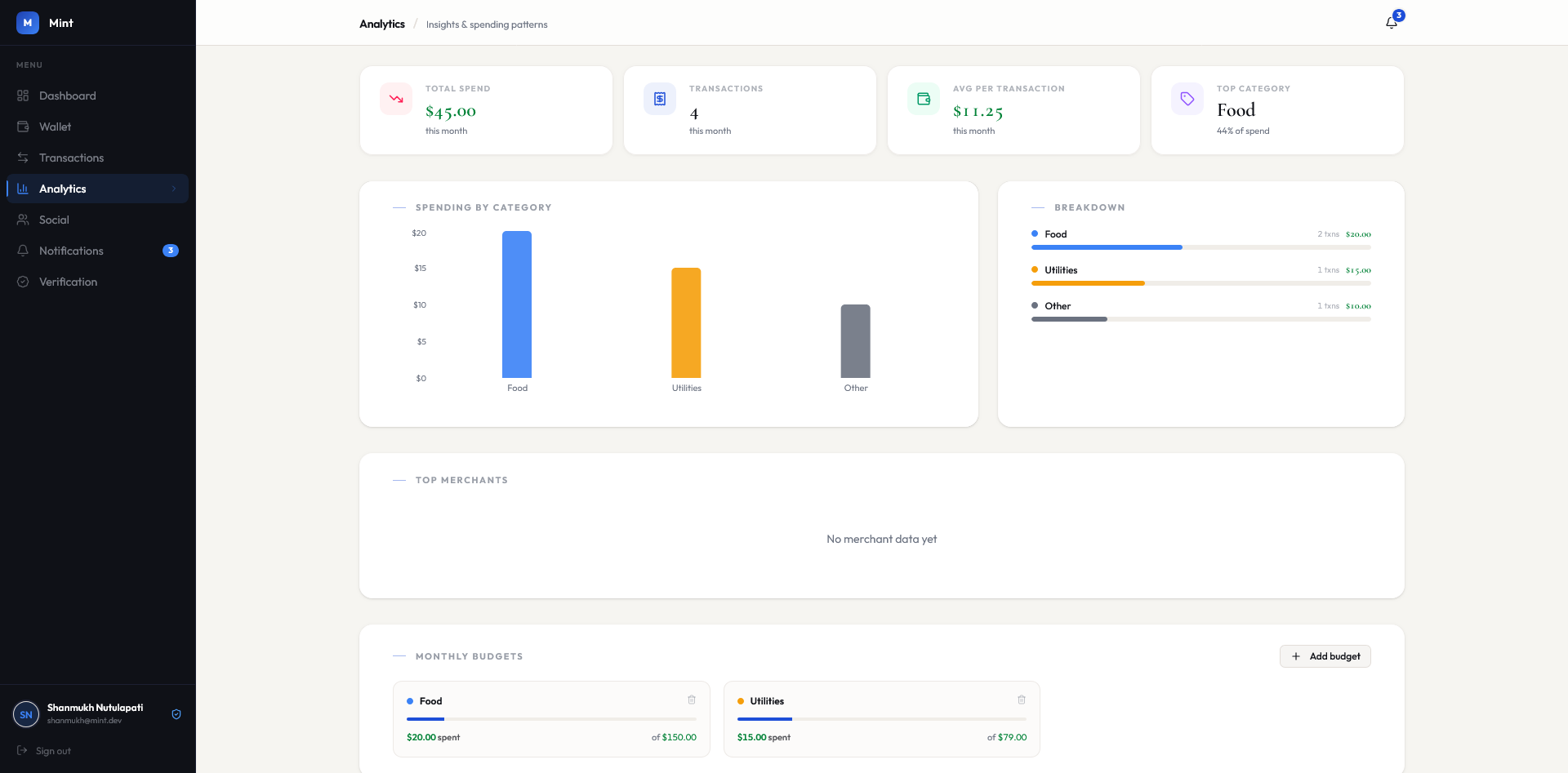

Analytics & Budgets

- Spend Categorisation - Completed transactions are auto-classified into FOOD, TRANSPORT, ENTERTAINMENT, UTILITIES, OTHER by merchant name and description matching

- Monthly Aggregates - Category totals and top merchants are updated in real time from Kafka events

- Budget Alerts - Users set per-category budgets; the analytics service publishes a Kafka event when a threshold is crossed, triggering a notification
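
A rough sketch of the categorisation and budget-alert logic described above. The keyword lists are invented for illustration, and the alert condition assumes the threshold is the budget amount itself; the actual matching rules and threshold definition are not documented here.

```python
from decimal import Decimal

# Hypothetical keyword lists; the real matching rules may differ.
CATEGORY_KEYWORDS = {
    "FOOD": ("restaurant", "cafe", "grocery", "pizza"),
    "TRANSPORT": ("uber", "taxi", "metro", "fuel"),
    "ENTERTAINMENT": ("cinema", "netflix", "spotify", "concert"),
    "UTILITIES": ("electric", "water", "internet", "gas bill"),
}


def categorise(merchant: str, description: str = "") -> str:
    """Return the first category whose keyword appears in the merchant name or description."""
    text = f"{merchant} {description}".lower()
    for category, keywords in CATEGORY_KEYWORDS.items():
        if any(keyword in text for keyword in keywords):
            return category
    return "OTHER"


def crossed_budget(previous_total: Decimal, amount: Decimal, budget: Decimal) -> bool:
    """True only for the transaction that moves the running total past the budget,
    so a single alert event is produced per crossing."""
    return previous_total <= budget < previous_total + amount
```

Checking the crossing rather than `total > budget` avoids publishing a new alert for every transaction after the budget has already been exceeded.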

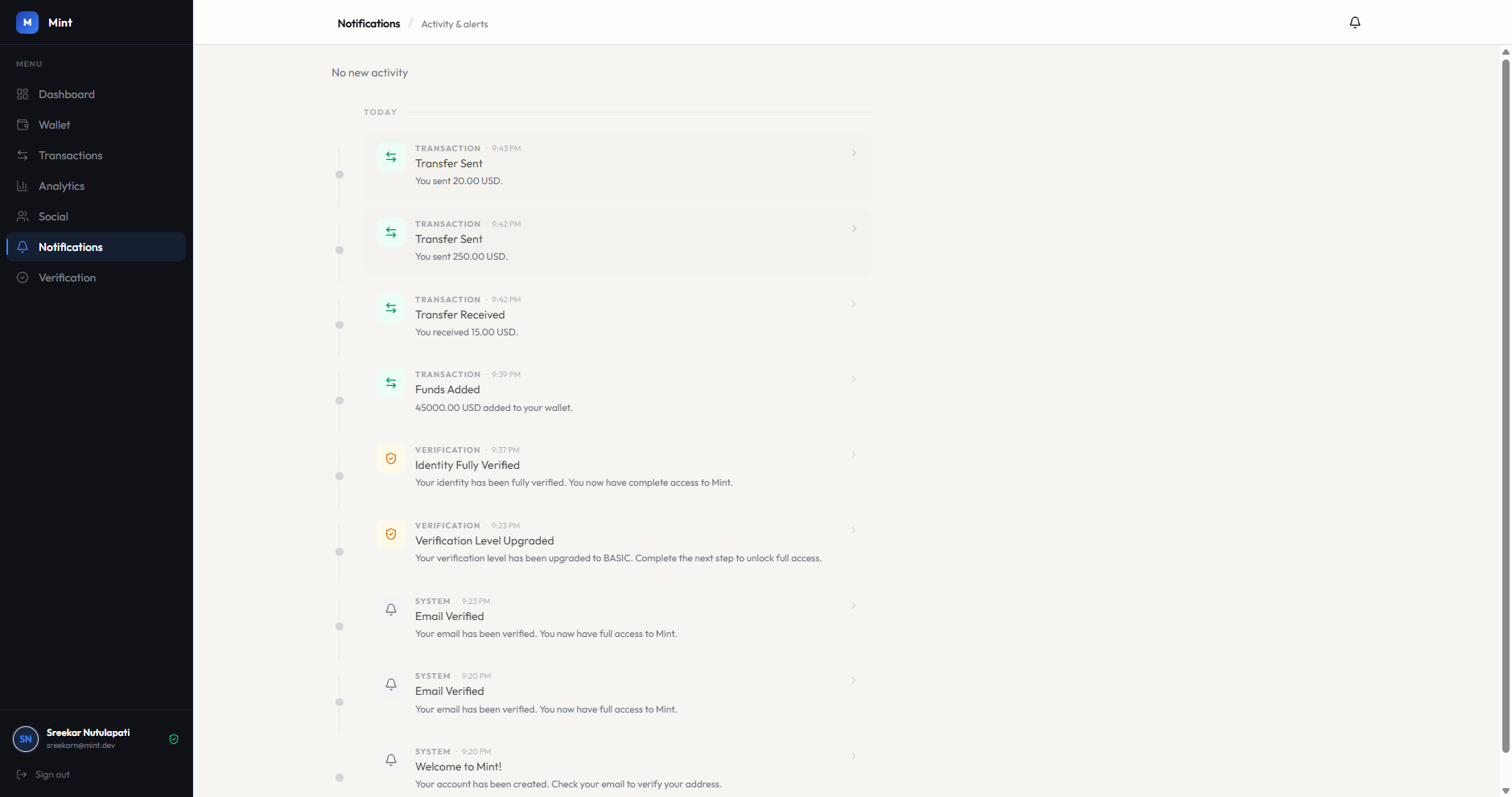

Notifications & Webhooks

- Real-Time SSE - In-app notification stream over Server-Sent Events; nginx disables proxy buffering so events land immediately

- Persistent Feed - Notifications stored in DB, readable as a paginated list with read/unread state

- User Webhooks - Users register HTTPS endpoints and subscribe to event types; payloads are HMAC-signed, delivered with exponential backoff retry via BullMQ
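
Webhook signing and retry scheduling might look roughly like this. The hash algorithm (SHA-256), the hex encoding, and the backoff parameters are assumptions; the text above only states that payloads are HMAC-signed and retried with exponential backoff.

```python
import hashlib
import hmac


def sign_payload(secret: bytes, body: bytes) -> str:
    """Hex HMAC of the raw request body (SHA-256 is an assumption)."""
    return hmac.new(secret, body, hashlib.sha256).hexdigest()


def verify_signature(secret: bytes, body: bytes, signature: str) -> bool:
    """Receiver-side check; compare_digest avoids leaking timing information."""
    expected = sign_payload(secret, body)
    return hmac.compare_digest(expected, signature)


def backoff_delays(attempts: int, base_seconds: float = 1.0, cap_seconds: float = 300.0) -> list[float]:
    """Delay before each retry: base * 2^n, capped at cap_seconds (hypothetical defaults)."""
    return [min(cap_seconds, base_seconds * 2 ** n) for n in range(attempts)]
```

A receiver would recompute the signature over the exact bytes it received and reject the request if `verify_signature` returns `False`.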

Admin Console

- User Management - Search, freeze/unfreeze accounts, update roles

- KYC Review Queue - Inspect submitted documents, approve or reject (emits Kafka event -> KYC service updates tier)

- Fraud Review Queue - Review REVIEW-scored transactions, approve or block

- Transaction Operations - List, reverse, or force-complete stuck transactions

- Global Limits - Configure platform-wide transaction limits without a deployment
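
The reverse and force-complete operations can be read as transitions in the transaction state machine listed under Transaction Engine. The states below come from that list, but which transitions are legal is not spelled out, so the `ALLOWED` table is an assumption made for this sketch.

```python
from enum import Enum


class TxState(Enum):
    PENDING = "PENDING"
    PROCESSING = "PROCESSING"
    COMPLETED = "COMPLETED"
    FAILED = "FAILED"
    CANCELLED = "CANCELLED"
    REVERSED = "REVERSED"


# Assumed transition table; only the state names are taken from the README.
ALLOWED = {
    TxState.PENDING: {TxState.PROCESSING, TxState.CANCELLED},
    TxState.PROCESSING: {TxState.COMPLETED, TxState.FAILED},  # force-complete lands here
    TxState.COMPLETED: {TxState.REVERSED},                    # admin reversal
    TxState.FAILED: set(),
    TxState.CANCELLED: set(),
    TxState.REVERSED: set(),
}


class InvalidTransition(Exception):
    """Raised when a requested state change is not in the ALLOWED table."""


def transition(current: TxState, target: TxState) -> TxState:
    """Return the new state, or raise if the move is not permitted."""
    if target not in ALLOWED[current]:
        raise InvalidTransition(f"{current.value} -> {target.value} is not allowed")
    return target
```

Centralising the table in one place means an admin action and the normal settlement path are validated by the same rules.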

Audit Log

- Immutable by Design - PostgreSQL trigger prevents UPDATE and DELETE on the audit table; every service publishes to `audit.events`

- Full Traceability - Each entry preserves the OpenTelemetry trace ID so any log entry can be correlated back to the original distributed trace in Grafana
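
To show the append-only idea in runnable form, the sketch below uses SQLite from Python's standard library as a stand-in for PostgreSQL. The table and trigger names are hypothetical, and the trigger syntax is SQLite's; in PostgreSQL the equivalent would be a trigger function that raises an exception.

```python
import sqlite3


def create_audit_table(conn: sqlite3.Connection) -> None:
    """Create an audit table whose rows can be inserted but never changed or removed."""
    conn.executescript(
        """
        CREATE TABLE audit_events (
            id INTEGER PRIMARY KEY,
            service TEXT NOT NULL,
            action TEXT NOT NULL,
            trace_id TEXT NOT NULL
        );
        CREATE TRIGGER audit_no_update BEFORE UPDATE ON audit_events
        BEGIN
            SELECT RAISE(ABORT, 'audit_events is append-only');
        END;
        CREATE TRIGGER audit_no_delete BEFORE DELETE ON audit_events
        BEGIN
            SELECT RAISE(ABORT, 'audit_events is append-only');
        END;
        """
    )


def try_statement(conn: sqlite3.Connection, sql: str) -> bool:
    """Execute a statement and report whether the database accepted it."""
    try:
        conn.execute(sql)
        return True
    except sqlite3.DatabaseError:
        return False
```

With this schema an `INSERT` succeeds, while any `UPDATE` or `DELETE` is rejected by the database itself rather than by application code.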

Observability

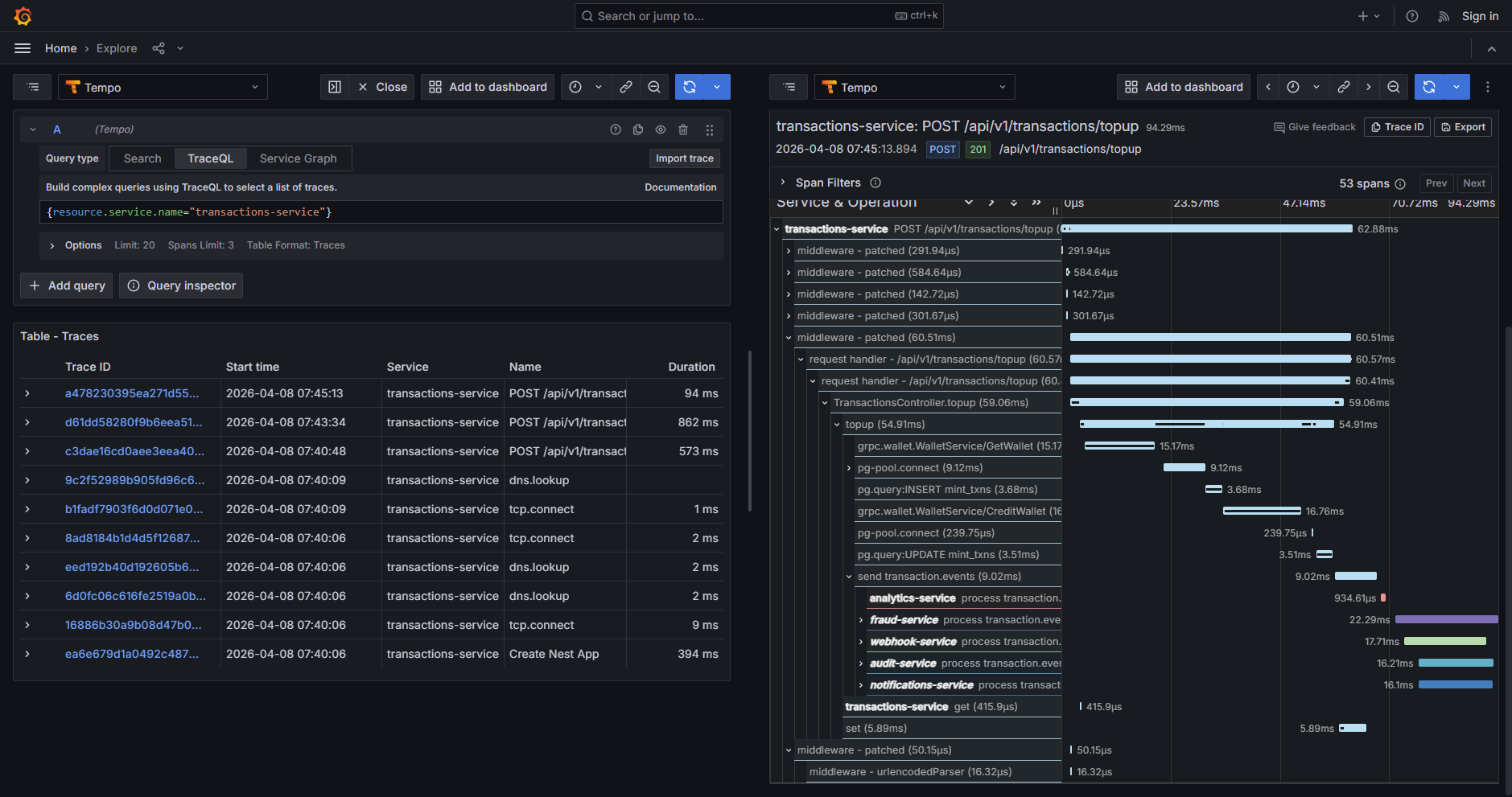

- Distributed Tracing - Every service ships OTLP traces to a local collector -> Grafana Tempo. A single top-up produces one trace spanning fraud scoring, KYC checks, wallet settlement, and all Kafka consumers

- Trace Propagation - W3C Trace Context over HTTP, gRPC metadata for gRPC calls, and a custom `KafkaTraceInterceptor` that writes and reads trace headers on Kafka messages so async boundaries don’t break the trace
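
The Kafka side of trace propagation can be illustrated with the W3C `traceparent` header format (`version-traceid-parentid-flags`). This is not the `KafkaTraceInterceptor` itself: `inject` and `extract` are hypothetical helpers, and message headers are modelled as a plain list of `(name, bytes)` pairs.

```python
import re
import secrets
from typing import List, Optional, Tuple

# version (2 hex) - trace-id (32 hex) - parent-id (16 hex) - flags (2 hex)
TRACEPARENT = re.compile(r"^([0-9a-f]{2})-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})$")

Headers = List[Tuple[str, bytes]]


def new_span_id() -> str:
    """Random 8-byte span ID rendered as 16 hex characters."""
    return secrets.token_hex(8)


def inject(headers: Headers, trace_id: str, span_id: str, sampled: bool = True) -> Headers:
    """Producer side: return headers with a single traceparent entry for this span."""
    flags = "01" if sampled else "00"
    value = f"00-{trace_id}-{span_id}-{flags}"
    kept = [h for h in headers if h[0] != "traceparent"]
    return kept + [("traceparent", value.encode("ascii"))]


def extract(headers: Headers) -> Optional[Tuple[str, str]]:
    """Consumer side: return (trace_id, parent_span_id), or None if absent or invalid."""
    for name, raw in headers:
        if name != "traceparent":
            continue
        match = TRACEPARENT.match(raw.decode("ascii", "replace"))
        # Version ff and all-zero IDs are invalid per the W3C spec.
        if match and match.group(1) != "ff" and int(match.group(2), 16) and int(match.group(3), 16):
            return match.group(2), match.group(3)
    return None
```

A consumer that gets a result from `extract` starts its own span with the same trace ID and the extracted span as parent, which is what keeps the async hop inside a single trace.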

Architecture

    graph TD
        Client -->|HTTP| nginx
        nginx -->|/| web
        nginx -->|/app-admin| web
        nginx -->|/api/v1/auth| auth
        nginx -->|/api/v1/wallet| wallet
        nginx -->|/api/v1/transactions| transactions
        nginx -->|/api/v1/kyc| kyc
        nginx -->|/api/v1/analytics| analytics
        nginx -->|/api/v1/notifications SSE| notifications
        nginx -->|/api/v1/social| social
        nginx -->|/api/v1/webhooks| webhook
        nginx -->|/admin| admin
        transactions -->|gRPC :50052| fraud
        transactions -->|gRPC :50053| kyc
        transactions -->|gRPC :50051| wallet
        admin -->|gRPC :50053| kyc
        admin -->|gRPC :50051| wallet
        admin -->|gRPC :50052| fraud

Communication Patterns

Mint uses two distinct internal communication patterns depending on whether the caller needs a synchronous result.

Synchronous - gRPC is used when the caller must have a result before continuing:

| Caller | Target | Purpose |
|---|---|---|
| transactions | fraud | Score every transaction before settlement |
| transactions | kyc | Check per-transaction and daily/monthly limits |
| transactions | wallet | Debit sender, credit recipient atomically |
| admin | kyc | Fetch KYC profiles and pending review queue |
| admin | wallet | Fetch wallet status for user management |
| admin | fraud | Fetch and action fraud cases |
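
As an illustration of the first row, a rules-based scorer that returns ALLOW / REVIEW / BLOCK with a 0-100 score and the rules that fired could be shaped like this. The rule names, point values, thresholds, and `Transaction` fields are all hypothetical; only the shape of the result follows the description under Transaction Engine.

```python
from dataclasses import dataclass, field
from decimal import Decimal
from typing import List


@dataclass(frozen=True)
class Transaction:
    amount: Decimal
    recipient_is_new: bool
    transfers_last_hour: int


@dataclass(frozen=True)
class FraudResult:
    decision: str  # "ALLOW", "REVIEW", or "BLOCK"
    score: int     # 0-100
    rules_fired: List[str] = field(default_factory=list)


# Hypothetical rules as (name, points, predicate); not Mint's actual rule set.
RULES = [
    ("LARGE_AMOUNT", 40, lambda tx: tx.amount >= Decimal("1000")),
    ("NEW_RECIPIENT", 25, lambda tx: tx.recipient_is_new),
    ("HIGH_VELOCITY", 45, lambda tx: tx.transfers_last_hour > 5),
]

REVIEW_THRESHOLD = 40  # assumed
BLOCK_THRESHOLD = 80   # assumed


def score_transaction(tx: Transaction) -> FraudResult:
    """Sum the points of every rule that fires, cap at 100, and map to a decision."""
    fired = [(name, points) for name, points, predicate in RULES if predicate(tx)]
    score = min(100, sum(points for _, points in fired))
    if score >= BLOCK_THRESHOLD:
        decision = "BLOCK"
    elif score >= REVIEW_THRESHOLD:
        decision = "REVIEW"
    else:
        decision = "ALLOW"
    return FraudResult(decision, score, [name for name, _ in fired])
```

Returning the fired rule names alongside the score gives a reviewer in the fraud queue the reason a transaction was flagged, not just the number.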

Asynchronous - Kafka is used for fan-out side effects where the producer doesn’t need to wait:

| Topic | Key Consumers |
|---|---|
| `auth.events` | wallet (create), kyc (create profile), notifications, audit |
| `transaction.events` | analytics, notifications, webhook, audit |
| `wallet.events` | notifications, webhook, audit |
| `kyc.events` | notifications, audit |
| `social.events` | transactions (auto-transfer on accept), notifications, audit |
| `analytics.events` | notifications (budget alerts), webhook |
| `admin.events` | kyc (approve/reject tier), audit |
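
The fan-out pattern in this table can be modelled with a small in-memory bus. It is a teaching sketch rather than a Kafka client: `InMemoryBus` and its methods are hypothetical, and `drain` stands in for consumers polling on their own schedule.

```python
from collections import defaultdict
from typing import Callable, DefaultDict, Dict, List, Tuple

Handler = Callable[[dict], None]


class InMemoryBus:
    """Toy publish/subscribe bus: every subscriber of a topic gets every event."""

    def __init__(self) -> None:
        self._subscribers: DefaultDict[str, Dict[str, Handler]] = defaultdict(dict)
        self._pending: List[Tuple[str, dict]] = []

    def subscribe(self, topic: str, consumer: str, handler: Handler) -> None:
        self._subscribers[topic][consumer] = handler

    def publish(self, topic: str, event: dict) -> None:
        # The producer only enqueues; it does not wait for any consumer.
        self._pending.append((topic, event))

    def drain(self) -> List[str]:
        """Deliver queued events to each subscriber; return consumer names in delivery order."""
        delivered: List[str] = []
        while self._pending:
            topic, event = self._pending.pop(0)
            for consumer, handler in self._subscribers[topic].items():
                handler(event)
                delivered.append(consumer)
        return delivered
```

Subscribing analytics, notifications, webhook, and audit to `transaction.events` and publishing one event results in four independent deliveries, none of which the producer waits for.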

Services

| Service | Stack | Port | Description |
|---|---|---|---|
| web | Next.js 15 | 3000 | User app and admin console |
| auth | FastAPI | 4001 | JWT issuance, refresh, RBAC |
| wallet | FastAPI + gRPC | 4002 / 50051 | Balance management, atomic settlement |
| transactions | NestJS | 4003 | Transfers, top-ups, idempotency |
| fraud | NestJS (gRPC only) | 50052 | Real-time rules-based fraud scoring |
| kyc | NestJS + gRPC | 4004 / 50053 | Document upload, tier management |
| analytics | NestJS | 4005 | Spend insights, category budgets |
| notifications | NestJS | 4006 | Persistent notifications + SSE stream |
| social | NestJS | 4007 | Contacts, money requests, bill splits |
| webhook | NestJS | 4008 | User-registered webhooks + delivery log |
| admin | NestJS | 4009 | Admin console API |
| audit | NestJS | 4010 | Immutable append-only audit log |

Getting Started

    # Clone the repository
    git clone https://github.com/sreekarnv/mint.git
    cd mint

    # Generate RSA keys (first time only)
    sh scripts/generate-keys.sh

    # Start all services
    docker compose -f docker-compose.dev.yml up -d --build

    # Create an admin user
    docker exec -it mint-auth uv run python /app/apps/auth/src/create_admin.py \
      --email admin@mint.dev \
      --password adminpass \
      --name "Admin User"

The user app is at http://localhost and the admin console at http://localhost/app-admin. Grafana traces are at http://localhost:4000.

What I Learned

Building Mint at this scale forced me to confront the real costs of distributed systems:

- Two communication protocols serve different needs - gRPC for synchronous calls where you need a result (fraud scoring, limit checks, settlement) and Kafka for async fan-out where you don’t. Conflating them leads to either tight coupling or unnecessary latency

- Polyglot is genuinely useful, but the integration surface is the hard part - Python services handle the stateful, mutation-heavy wallet and auth work well, but wiring gRPC stubs, shared proto definitions, and trace propagation across runtimes requires discipline

- Observability must be designed in, not bolted on - Getting a single trace to span HTTP -> gRPC -> Kafka required explicit propagation at every boundary. Without it, async failures are nearly impossible to debug in production

- Idempotency is not optional in financial systems - Network retries are guaranteed to happen; building safe retry handling from the start saved considerable pain later

- The audit log is the source of truth - Having an immutable, append-only log that every service writes to independently made debugging event ordering issues far easier than reading application logs